It all comes down to training

05/27/2021 by Preligens

Satellite images used for Defence and Intelligence purposes are taken from more than 600 kilometers above us. It is like taking a picture of the Eiffel Tower from the top of Mont Blanc. Their resolution, pixel count and size are therefore very different from the pictures we take with our smartphones, and this is a real challenge when developing machine learning algorithms.

To exploit this demanding kind of data and reach top detection and identification performance, input data is essential for the algorithm to keep improving. But in the end, it all comes down to training.

In this article, we will explore why training matters so much in machine learning and how we make our detections work even in the toughest cases, sometimes better than the human eye.

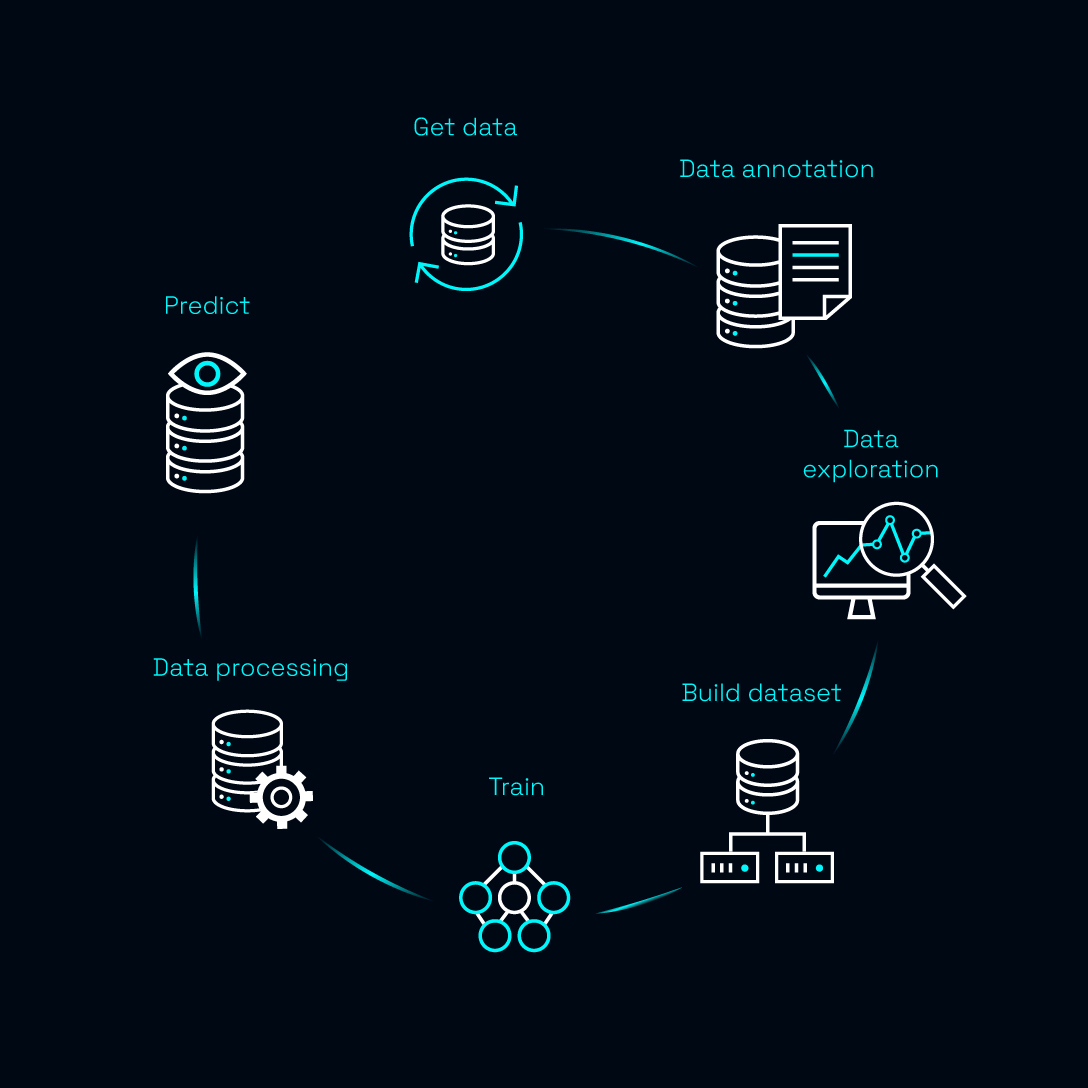

Training is the fifth step of the algorithm creation process. It is the step where state-of-the-art AI techniques are integrated into the architecture in order to find the best-performing model and reach unrivalled performance.

To find needles in haystacks

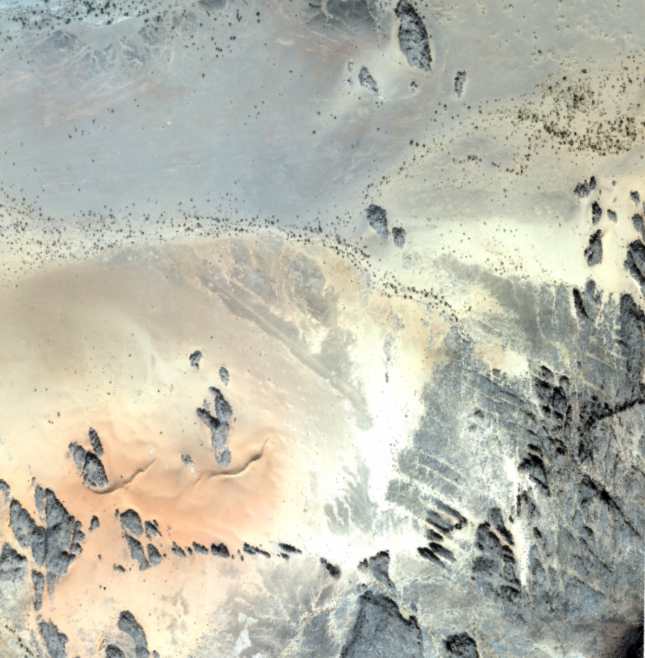

When applying AI to Defence and Intelligence, finding needles in haystacks is a standard use case. Since a single satellite image can cover hundreds of square kilometers, satellite imagery is a great candidate for monitoring a large area at a glance.

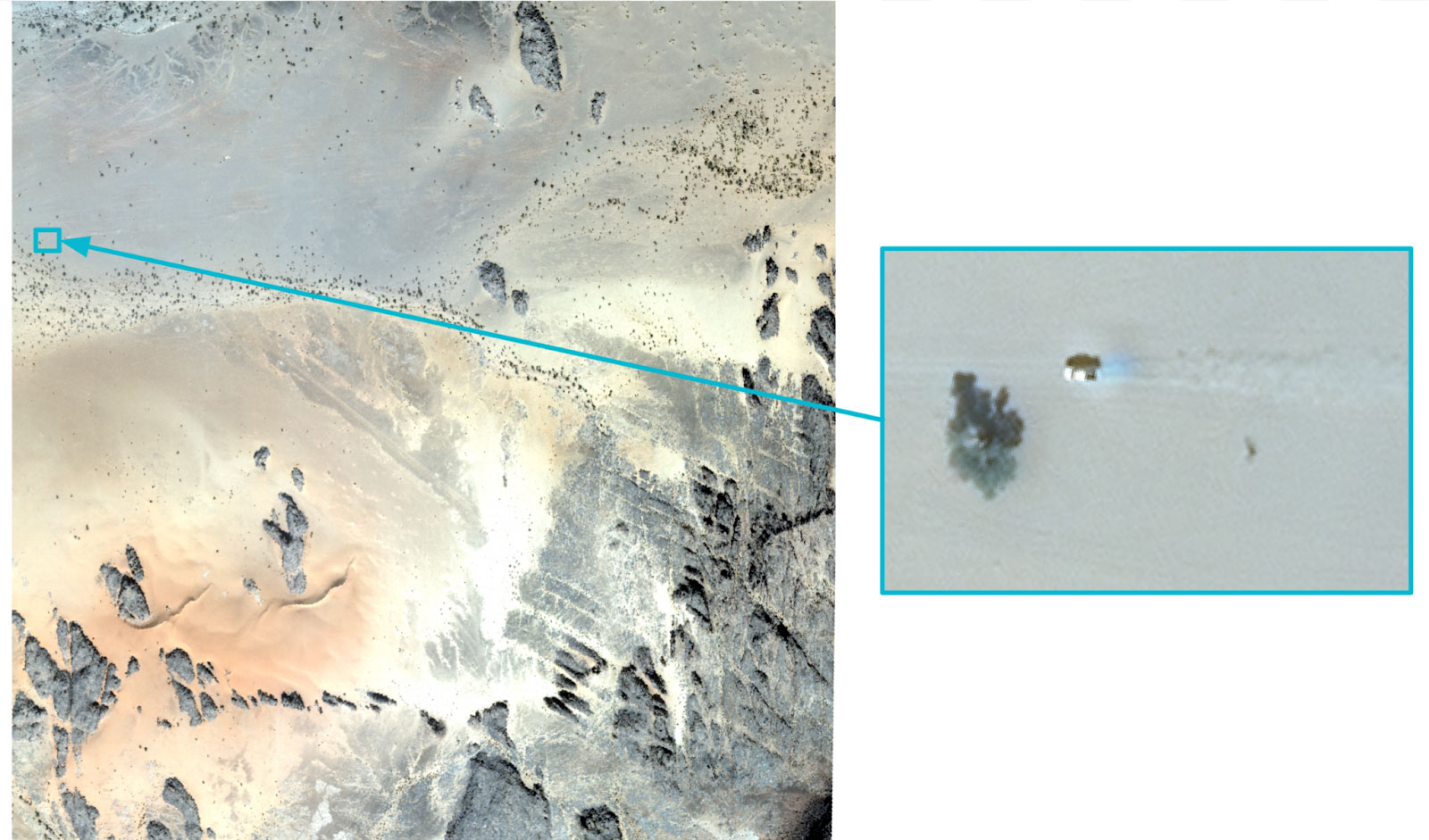

Let’s pit the human eye against the machine: can you spot the pick-up in this image? Hint: it is located near a tree.

This is hard for humans without a proper zoom, yet the AI detects the object of interest in a matter of seconds.

To tell the pick-up apart from the surrounding rocks and trees, the vehicle detector must have been trained on a wide variety of landscapes.

The data science team at Preligens has developed approaches that use advanced techniques to transform the context of a scene. This introduces more diversity into the training data and, in turn, improves how well the algorithms generalize. Style transfer is one of these approaches.

Style transfer extracts the style of one image and applies it to another. In computer vision, it can be used to change the background conditions of an image on which the algorithm already performs well, so that the algorithm learns to perform just as well on the modified image (e.g. turning a vegetated background into a snowy one, as in the example below).

Example of style transfer applied to satellite images: switching the setting from vegetation to snow, since snowy images are harder to come by.
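To give a feel for what style transfer computes, here is a minimal sketch of the style and content losses from neural style transfer as described by Gatys et al. It is not Preligens' proprietary pipeline. It assumes the feature maps come from one layer of a pretrained convolutional network (VGG is the usual choice); random arrays stand in for them here, and all function names are hypothetical. Style is captured by the Gram matrix of the features, which records which channels fire together regardless of where they fire, while content is compared position by position.

```python
import numpy as np

def gram_matrix(features: np.ndarray) -> np.ndarray:
    """Channel-by-channel correlations of a (channels, height, width) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    # Summing over all pixel positions discards *where* patterns occur: this is "style".
    return flat @ flat.T / (c * h * w)

def style_loss(generated: np.ndarray, style_ref: np.ndarray) -> float:
    """Mean squared difference between the Gram matrices of two feature maps."""
    return float(np.mean((gram_matrix(generated) - gram_matrix(style_ref)) ** 2))

def content_loss(generated: np.ndarray, content_ref: np.ndarray) -> float:
    """Position-by-position squared difference: keeps objects where they are."""
    return float(np.mean((generated - content_ref) ** 2))

# Usage with stand-in features (hypothetical; real ones would come from a CNN).
rng = np.random.default_rng(0)
scene = rng.standard_normal((4, 8, 8))
shifted = np.roll(scene, shift=3, axis=2)  # same patterns, moved sideways
print(style_loss(shifted, scene))    # (close to) zero: Gram matrices ignore position
print(content_loss(shifted, scene))  # clearly positive: content depends on position
```

In a full pipeline, the pixels of a generated image are optimized by gradient descent through the network so that its content loss stays low with respect to the original vegetated scene while its Gram matrices match those of a snowy reference scene.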

Or to perform better than any other on distinctive tough cases

When it comes to tough cases in satellite image analysis, two situations arise:

- a lack of visibility on images, mostly due to weather conditions

- a lack of available examples to train the AI on, due to the scarcity of the object itself

The level of cloud cover is one of the first filters applied when downloading imagery from our providers. Indeed, just as an optical satellite cannot see below the surface of water, it cannot see through fog or clouds.
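As an illustration, such a filter can be as simple as a threshold on the cloud-cover figure that imagery providers attach to each scene's metadata. The sketch below assumes a hypothetical `SceneMetadata` record with a `cloud_cover` fraction between 0 and 1 and an arbitrary 20% threshold; real provider catalogs use their own field names and units.

```python
from dataclasses import dataclass

@dataclass
class SceneMetadata:
    scene_id: str
    cloud_cover: float  # hypothetical field: fraction of the scene under cloud, 0.0 to 1.0

def filter_by_cloud_cover(scenes, max_cloud_cover=0.2):
    """Keep only the scenes whose reported cloud cover is at or below the threshold."""
    return [s for s in scenes if s.cloud_cover <= max_cloud_cover]

catalog = [
    SceneMetadata("scene_a", 0.05),
    SceneMetadata("scene_b", 0.60),
    SceneMetadata("scene_c", 0.20),
]
print([s.scene_id for s in filter_by_cloud_cover(catalog)])  # ['scene_a', 'scene_c']
```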

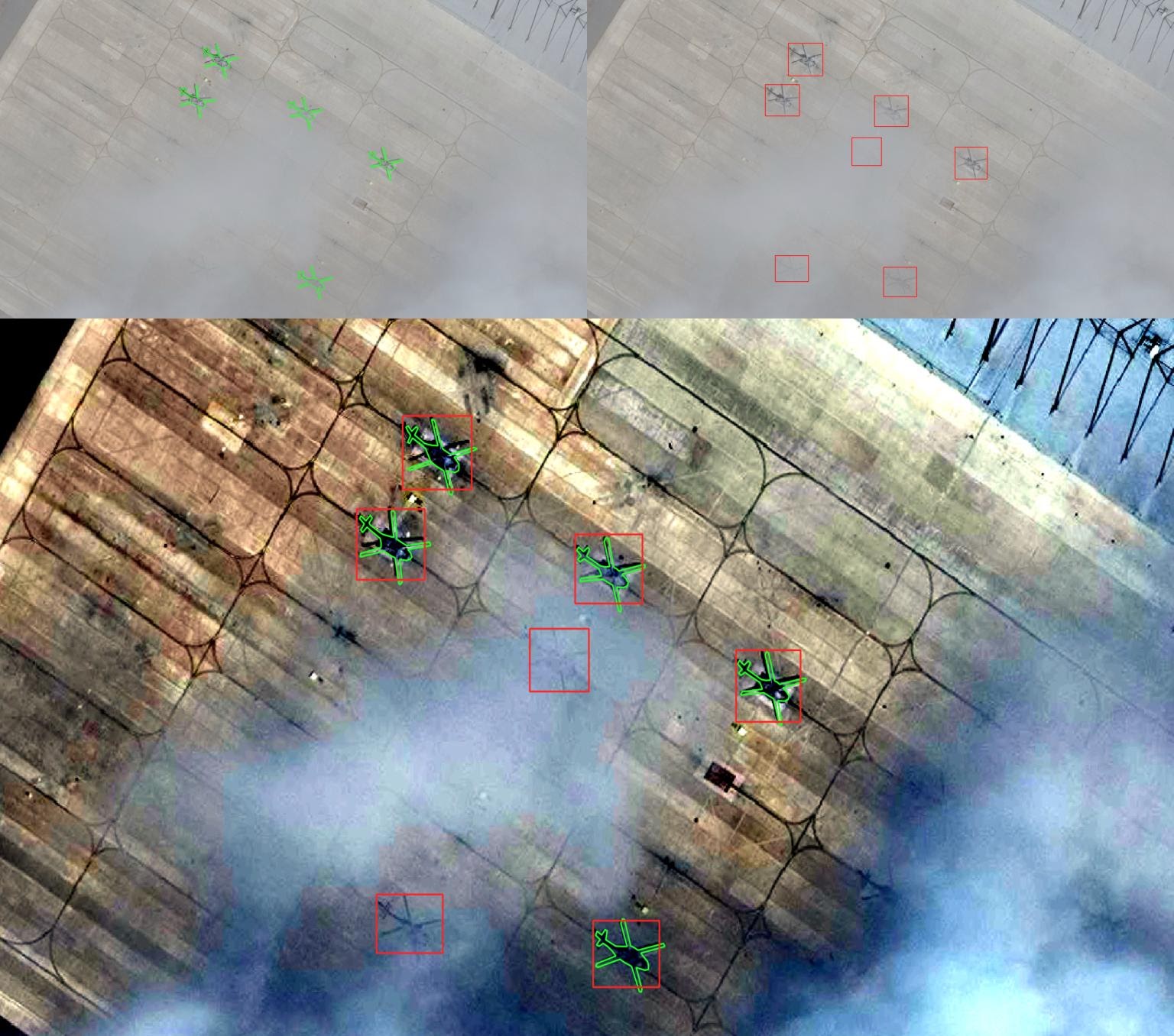

Yet this is where artificial intelligence can outperform the human eye: if it is properly trained to recognize objects of interest, specifically in bad weather conditions, the machine can spot them even when humans cannot.

Ground truth is shown in green, and predictions are shown as red squares.

The final image, enhanced with strong histogram equalization, reveals helicopters beneath the clouds. Our helicopter detector caught them, yet annotators could easily have missed them.
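For readers curious about what histogram equalization does, here is a minimal sketch of the classic global version for an 8-bit grayscale image, written with NumPy only. It is an illustration, not the processing Preligens applied to that image; satellite products are often 11 to 16 bits deep, which this sketch does not handle. Ready-made versions exist, e.g. `skimage.exposure.equalize_hist` in scikit-image or `cv2.equalizeHist` in OpenCV.

```python
import numpy as np

def equalize_histogram(image: np.ndarray) -> np.ndarray:
    """Global histogram equalization for a 2-D uint8 grayscale image."""
    # Count how many pixels take each of the 256 possible grey levels.
    hist = np.bincount(image.ravel(), minlength=256)
    # Cumulative distribution: number of pixels at or below each level.
    cdf = np.cumsum(hist)
    cdf_min = cdf[np.nonzero(cdf)[0][0]]  # count at the darkest level actually present
    total = image.size
    if total == cdf_min:
        return image.copy()  # constant image: there is no contrast to stretch
    # Remap levels so that the output spreads over the full 0-255 range.
    lut = np.round((cdf - cdf_min) / (total - cdf_min) * 255)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[image]

# Usage: a hazy patch whose values are squeezed into a narrow band (120-135).
hazy = np.array([[120, 125], [130, 135]], dtype=np.uint8)
print(equalize_histogram(hazy))  # levels now spread from 0 to 255
```

Because faint, low-contrast structures under thin cloud occupy only a narrow band of grey levels, stretching that band makes them far easier to see for a human reviewer.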

As stated previously, tough cases can also be a consequence of a lack of images to train on, due to a scarcity of the object of interest itself.

Data augmentation techniques exist to create a greater number of training examples when very few real examples are available: these techniques make use of random transformations applied to real data, thereby creating a broader diversity of examples.
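A minimal sketch of such random transformations, assuming single-band image chips stored as float arrays in [0, 1]; the function names and parameter ranges are illustrative choices, not Preligens' settings. Flips and 90-degree rotations suit overhead imagery particularly well, since a satellite view has no canonical "up".

```python
import numpy as np

def random_augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Randomly flip, rotate by a multiple of 90 degrees, and jitter brightness."""
    out = image
    if rng.random() < 0.5:
        out = np.flip(out, axis=1)  # horizontal flip
    if rng.random() < 0.5:
        out = np.flip(out, axis=0)  # vertical flip
    k = int(rng.integers(0, 4))
    out = np.rot90(out, k=k, axes=(0, 1))  # rotate by k * 90 degrees
    gain = rng.uniform(0.8, 1.2)           # assumed brightness range
    return np.clip(out * gain, 0.0, 1.0)   # keep values in [0, 1]

def augment_dataset(images, copies_per_image: int, seed: int = 0):
    """Create several randomly transformed copies of each real image."""
    rng = np.random.default_rng(seed)
    return [random_augment(img, rng) for img in images for _ in range(copies_per_image)]

# Usage: turn one real 16x16 chip into five training variants.
chip = np.random.default_rng(1).random((16, 16))
variants = augment_dataset([chip], copies_per_image=5)
print(len(variants))  # 5
```

For detection tasks, the same geometric transformation must also be applied to the bounding boxes, and a 90-degree rotation swaps height and width on non-square chips.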

The data science team at Preligens uses proprietary data generation technologies based on the latest generative and 3D techniques to feed the training datasets with simulated data. This enables the team to cope with even the scarcest use cases.

Example of the use of simulation - all aircraft have been manually added to this satellite image to grow the training database.
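Preligens' simulation relies on proprietary generative and 3D techniques that are not described here. As a much simpler stand-in, the sketch below shows plain copy-paste compositing: a synthetic object chip is alpha-blended into a real background and its bounding box is recorded as a free label. The function name, array shapes and values are hypothetical.

```python
import numpy as np

def paste_object(background, obj, mask, top, left):
    """Alpha-blend an object chip into a background and return the image and its box.

    background: (H, W) float array; obj and mask: (h, w) float arrays, mask in [0, 1].
    Returns (composited image, (x_min, y_min, x_max, y_max)) in pixel coordinates.
    """
    h, w = obj.shape
    H, W = background.shape
    if top < 0 or left < 0 or top + h > H or left + w > W:
        raise ValueError("object does not fit at this position")
    out = background.copy()  # never modify the original image
    region = out[top:top + h, left:left + w]
    # mask = 1 keeps the object pixel, mask = 0 keeps the background pixel.
    out[top:top + h, left:left + w] = mask * obj + (1.0 - mask) * region
    return out, (left, top, left + w, top + h)

# Usage with hypothetical data: one bright synthetic "aircraft" on a grey scene.
rng = np.random.default_rng(0)
background = rng.uniform(0.3, 0.5, size=(64, 64))  # stand-in for a real image chip
aircraft = np.full((6, 8), 0.9)                     # synthetic object
mask = np.ones((6, 8))                              # fully opaque silhouette
image, box = paste_object(background, aircraft, mask, top=20, left=30)
print(box)  # (30, 20, 38, 26)
```

The box comes for free with each pasted object, which is what makes simulated data cheap to label compared with manual annotation.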

Additionally, a third technique called active learning can be leveraged to overcome corner cases.

Active learning is a semi-supervised technique that prioritizes which data should be labelled so as to have the greatest impact on training a supervised model.

Once our customers have seen how effective our application is on general use cases, they often ask us to identify objects that are harder to detect. Active learning then makes it possible to find the few relevant images to train on.

Since not all labelled data brings the same value to the training process, our proprietary AI framework prioritizes the images that will maximize the training outcome, even for rarely seen objects.

Active learning thus has a double benefit: it increases training effectiveness, and it makes Preligens AI more frugal, since Preligens can focus on the data that brings value to the detector and discard low-value data.

The red zones correspond to the algorithm's 'uncertainties'. This image should therefore be selected for training, since it contains valuable information.
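One common way to turn "uncertainties" into a labelling priority is uncertainty sampling: score each unlabelled image by the entropy of the model's predicted class probabilities and send the highest-scoring images to annotators first. The sketch below illustrates that generic strategy; it is not a description of Preligens' framework, and the per-image aggregation (the most uncertain detection wins), the function names and the data are all assumptions.

```python
import numpy as np

def prediction_entropy(probs: np.ndarray) -> np.ndarray:
    """Shannon entropy (in nats) of each row of class probabilities; higher = less certain."""
    p = np.clip(probs, 1e-12, 1.0)  # avoid log(0)
    return -np.sum(p * np.log(p), axis=-1)

def image_uncertainty(detection_probs) -> float:
    """Score an image by its most uncertain detection (assumed aggregation rule)."""
    if len(detection_probs) == 0:
        return 0.0
    return float(np.max(prediction_entropy(np.asarray(detection_probs, dtype=float))))

def select_for_labelling(pool: dict, budget: int) -> list:
    """Return the ids of the `budget` most uncertain images in the unlabelled pool."""
    scores = {image_id: image_uncertainty(probs) for image_id, probs in pool.items()}
    return sorted(scores, key=scores.get, reverse=True)[:budget]

# Usage: hypothetical per-image class probabilities from the current model.
pool = {
    "confident": [[0.99, 0.01]],
    "uncertain": [[0.5, 0.5]],
    "mixed": [[0.9, 0.1], [0.6, 0.4]],
    "empty": [],
}
print(select_for_labelling(pool, budget=2))  # ['uncertain', 'mixed']
```

Images on which the model is already confident score low and can be skipped, which is where the frugality described above comes from.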

Conclusion

In a nutshell, integrating state-of-the-art deep learning models to develop robust object identification algorithms delivers strong performance. But to meet the requirements of Defence and Intelligence customers around the world, a few more cutting-edge components are required.

Depending on the need, Preligens data scientists pick from dozens of state-of-the-art techniques to build the best-performing models, in a highly flexible way.

Care to learn more about the Preligens AI factory and how the training process fits into our proprietary framework for developing algorithms? Check out our dedicated page and reach out to discuss our capabilities.